Scaling Our Open-Source Environments Program

Today, we're scaling up our open-source environments program to become the global hub for open evals and RL environments.

As part of this, we're committing hundreds of thousands of dollars in grants and looking for partners who want to join our mission to accelerate open superintelligence.

Over the past two months, we've crowdsourced 400+ frontier RL environments and evals through bounties and our RL residency, which drew more than 500 applicants.

Through our new large-scale bounty program, we will scale this to thousands of open-source environments.

Over the past few weeks, the community has crowdsourced environments across:

- Autonomous AI Research

- Frontier Evals

- Browser Automation

- Theorem Proving

- Subject-Specific QA

- Legal/Finance Tasks

and many more domains...

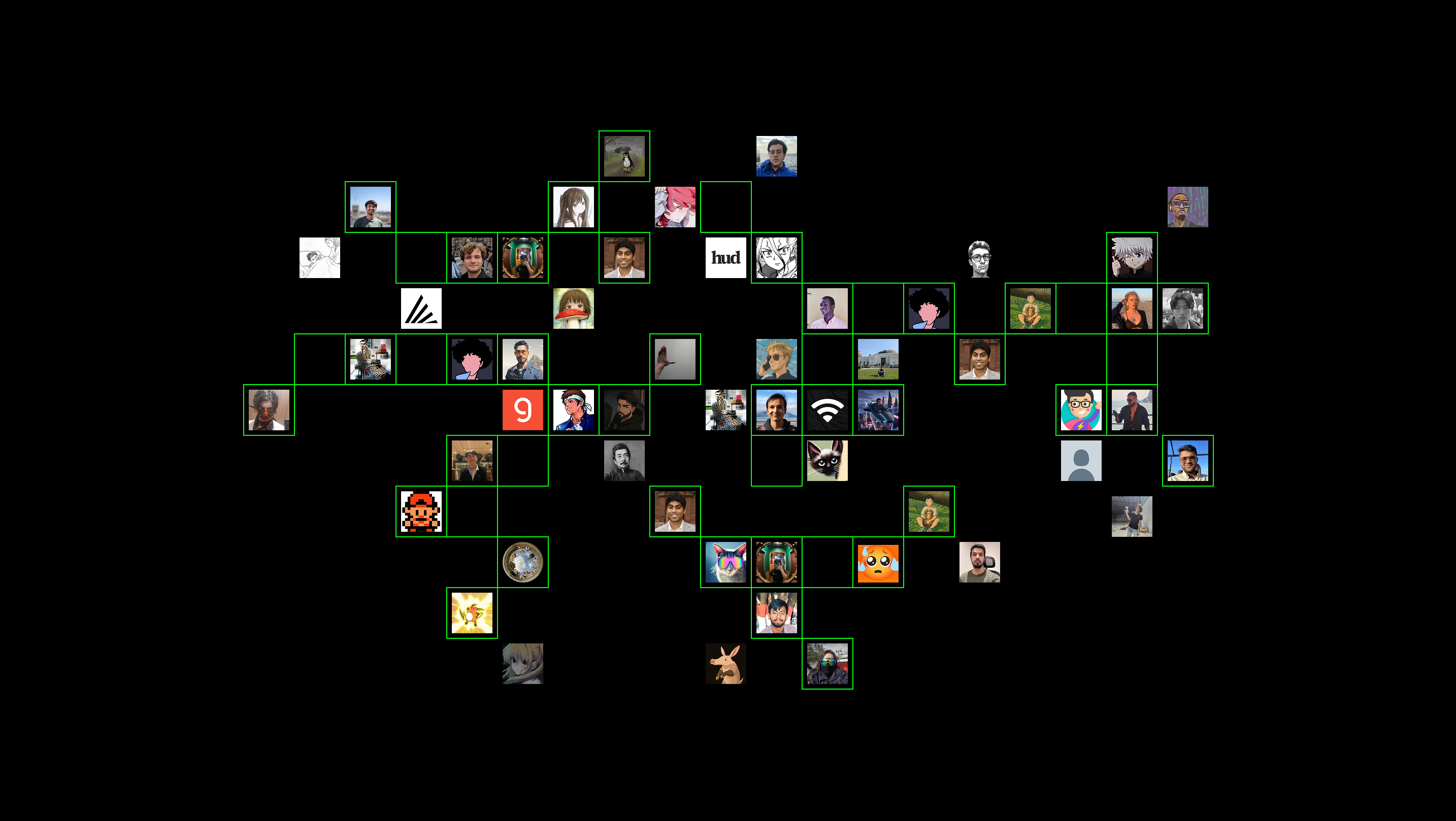

Thank you to all the community members who've contributed an environment or joined the residency so far!

We’ve learned a lot over the past two months from our bounty and RL Residency programs: we've scaled past 400 environments, reviewed more than 80 implementations, and received more than 500 applications for the residency.

Today, we’re committing hundreds of thousands of dollars in grants to sponsor builders around the world and help us grow the Environments Hub into the premier platform for open evals and RL environments.

Many of these grants will be in the form of bounties for completing specific environment implementations, such as popular evaluation suites, paper reproductions, or ecosystem integrations.

Prime Intellect Bounty Program - Environments, Evals, and Open Superintelligence

To scale the Environments Hub while maintaining high quality, the bounty program is split into two categories: Open Access tasks and Application-Only tasks.

- Open Access tasks can be attempted by anyone, are intended for first-time builders within the Environments Hub ecosystem, and have smaller bounties in the $100-500 range.

- Application-Only tasks are more complex in scope and carry larger bounties, ranging from $5000+. They are intended for builders who are already comfortable building environments and evals, and will be assigned on an approval basis to those who apply via this form: https://form.typeform.com/to/jLfT7v7o

Bounties are awarded upon successful completion of the task. For Application-Only tasks involving reimplementation of established benchmarks, this will entail full reproduction of known evaluation scores for a relevant subset of models.

Call for Partners

We are also opening a Call for Partners for organizations who are eager to sponsor bounties via this program, or who are otherwise interested in participating (e.g. volunteering reviewer time, donating API credits, suggesting tasks for implementation, formalizing validation criteria, etc).

Apply here: https://form.typeform.com/to/DkpaAX0d

Example ideas:

- Sourcing environments for knowledge or skills in a particular domain of interest (e.g. medicine, materials science, systems programming)

- Companies interested in sponsoring environments & evals built around their tools

- Companies or organizations who are training their own models and are seeking custom RL environments.

- Companies who are creating their own evals and are interested in spotlighting work samples on the Environments Hub

- Academic researchers who are creating their own novel benchmarks: we are happy to sponsor evaluation costs for benchmarks whose reference implementation is built with verifiers and released on the Environments Hub.

Bounties sponsored by third-party organizations will typically fall within the Application-Only category, and we will help match your task with capable and compatible builders from our community.

Next Steps

Our goal is to establish the Environments Hub as the global center of gravity for building, sharing and accessing RL environments & evals.

We’re excited to scale the scope, complexity and usefulness of environments on the hub to enable the open-source ecosystem to train increasingly competitive and useful models.

We’ve already been training and evaluating INTELLECT-3 on environments and evals contributed by the community over the past few months, scaling RL post-training by orders of magnitude beyond our previous efforts.

Let's open-source the tools to build superintelligence.